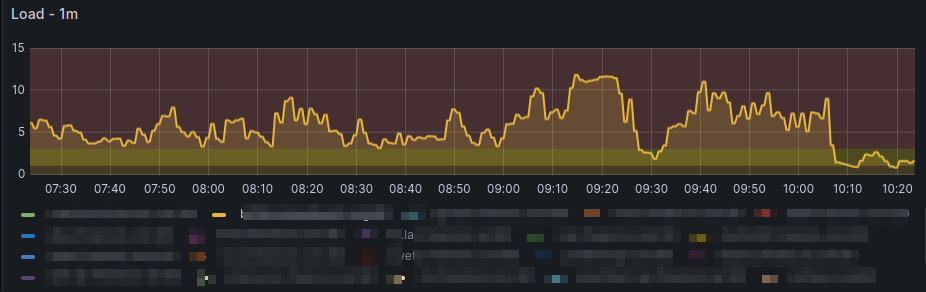

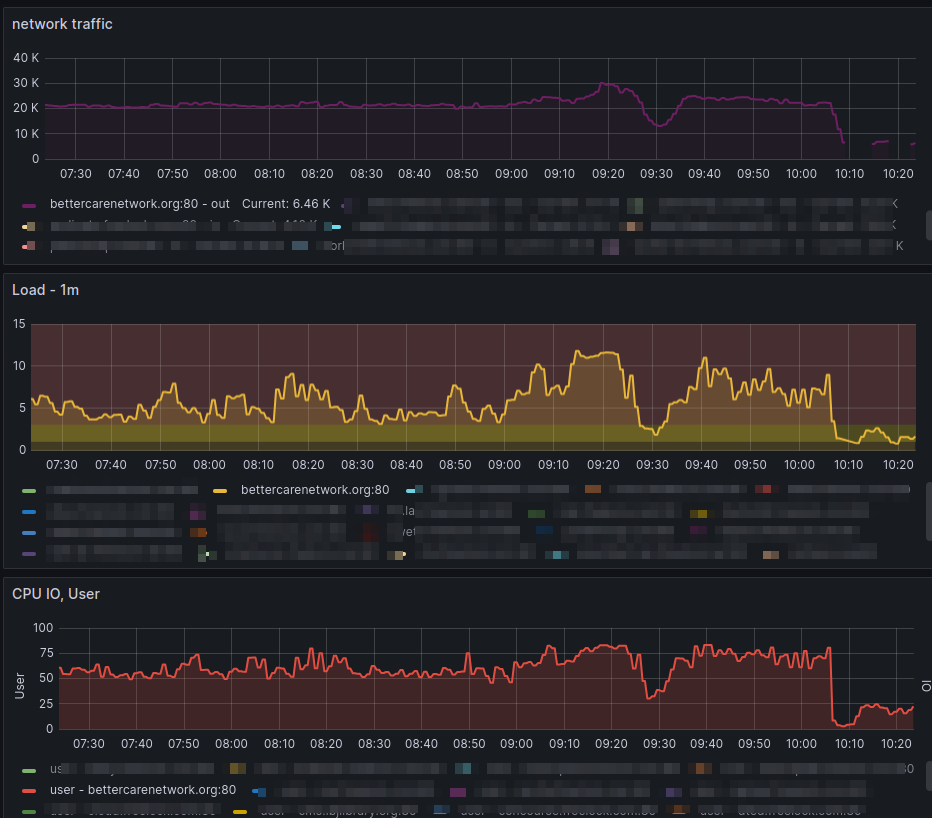

High load isn't necessarily an emergency, but it can be an early warning before a site noticeably slows down. Sometimes there are odd spikes that go away on their own, but sometimes high load is an indication of a Denial of Service.

Rate Limiting

Nginx has built-in rate limiting that can be used when a slow URL is being targeted. Today one of our sites triggered high-load alerts. Here's how I handled it:

- Check what was generating the load. I logged into the server and ran `top`, which immediately showed 7-8 PHP processes near the top of the list.

- Check the responsible process's status. I went to the PHP status page we have in our Nginx config and saw that all available processes were in use, and many of them were handling an /index.php?document-type=... path, i.e. a search path with a lot of parameters.

- Review the logs. Looking in the Nginx access_log, I found that the /search path was being hit 2-3 times per second from multiple different IP addresses, all sharing the same user agent: "Bytespider". This is a crawler from ByteDance (the company that owns TikTok).

- Determine a plan. In this case, after figuring out that Bytespider had hit the search page 33K times in the previous 6 hours, and it was also loading all page-related assets, I decided this was not something to block entirely, but a good situation to use a rate limit.
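The log review step can be scripted. Here's a minimal Python sketch, assuming the access log uses Nginx's default "combined" log format; the helper name `count_search_hits` is hypothetical, and the log path varies by server.

```python
import re
import sys
from collections import Counter

# Nginx's default "combined" log format:
# $remote_addr - $remote_user [$time_local] "$request" $status $body_bytes_sent "$http_referer" "$http_user_agent"
COMBINED = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) \S+ "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def count_search_hits(lines, path_prefix="/search"):
    """Count hits on path_prefix per user agent, plus the distinct client IPs behind each agent."""
    hits = Counter()
    ips = {}
    for line in lines:
        m = COMBINED.match(line)
        if not m:
            continue  # not in combined format; skip it
        parts = m.group("request").split()  # e.g. ["GET", "/search?...", "HTTP/1.1"]
        if len(parts) < 2 or not parts[1].startswith(path_prefix):
            continue
        agent = m.group("agent")
        hits[agent] += 1
        ips.setdefault(agent, set()).add(m.group("ip"))
    return hits, ips


if __name__ == "__main__":
    # Pass the access log path as the first argument (location depends on your setup).
    if len(sys.argv) > 1:
        with open(sys.argv[1], encoding="utf-8", errors="replace") as log:
            hits, ips = count_search_hits(log)
        for agent, count in hits.most_common(10):
            print(f"{count:6d} hits from {len(ips[agent]):4d} IPs  {agent}")
```

The output lists the busiest user agents on /search along with how many distinct IPs each came from; many IPs sharing one user agent is the pattern that pointed at Bytespider here.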

Implementation

A couple of searches turned up some good references and instructions on this: https://serverfault.com/questions/639671/nginx-how-to-limit..., https://theawesomegarage.com/blog/limit-bandwidth..., https://www.nginx.com/blog/rate-limiting-nginx/

In our standard Nginx configuration, every file matching /etc/nginx/conf.d/*.conf is included in the main "http" block, so I created a "limits.conf" file there for the map and limit_req_zone directives (both belong in the http context):

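```nginx
# $limit_bot = the full user agent for Bytespider requests, "" for everyone else.
map $http_user_agent $limit_bot {
    default "";
    ~*(Bytespider) $http_user_agent;  # ~* = case-insensitive regex match
}

# Track each non-empty $limit_bot value in a 10 MB zone, at 30 requests per minute.
limit_req_zone $limit_bot zone=bots:10m rate=30r/m;
```

I had to read up on map and limit_req_zone to understand this. A map creates a new variable from an existing one, and limit_req_zone doesn't count requests whose key (its first argument) is empty. So this map outputs the Bytespider user agent string or nothing, and only Bytespider requests are rate limited.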
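To make the map's behavior concrete, here's a small Python stand-in for it. It's only a model, not how Nginx evaluates maps: it assumes a single regex entry, and the function names `limit_bot` and `counted_by_zone` are hypothetical.

```python
import re

# Stand-in for:  map $http_user_agent $limit_bot { default ""; ~*(Bytespider) $http_user_agent; }
# "~*" means a case-insensitive regex; Nginx regexes are not anchored, so a match anywhere counts.
BOT_UA = re.compile(r"(Bytespider)", re.IGNORECASE)


def limit_bot(http_user_agent: str) -> str:
    """Value $limit_bot would take: the whole user agent for matches, "" (the default) otherwise."""
    return http_user_agent if BOT_UA.search(http_user_agent) else ""


def counted_by_zone(http_user_agent: str) -> bool:
    """limit_req_zone ignores requests whose key is empty, so only matches are rate limited."""
    return limit_bot(http_user_agent) != ""


if __name__ == "__main__":
    for ua in ("Mozilla/5.0 (compatible; Bytespider)", "Mozilla/5.0 (X11; Linux x86_64) Firefox/126.0"):
        print(repr(limit_bot(ua)), counted_by_zone(ua))
```

Because the map returns the whole user agent rather than a fixed string, each distinct Bytespider user agent string becomes its own key in the zone.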

The limit_req_zone directive creates a shared memory zone named 'bots', allocating 10 MB to track the different key values (which is probably entirely unnecessary, since we only have a single variation here!). Each distinct user agent string in $limit_bot is then limited to 30 requests per minute, or 1 every 2 seconds.
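The "30 requests per minute, 1 every 2 seconds" arithmetic can be checked with a tiny helper. Nginx accepts rates in requests per second (r/s) or requests per minute (r/m); the function name here is hypothetical.

```python
def rate_to_interval_ms(rate: str) -> float:
    """Milliseconds between allowed requests for an Nginx-style rate like "30r/m" or "5r/s"."""
    if rate.endswith("r/s"):
        per_second = float(rate[:-3])
    elif rate.endswith("r/m"):
        per_second = float(rate[:-3]) / 60  # r/m is for rates below one request per second
    else:
        raise ValueError(f"expected a rate like '30r/m' or '5r/s', got {rate!r}")
    return 1000 / per_second


if __name__ == "__main__":
    print(rate_to_interval_ms("30r/m"))  # one request every 2000 ms per key
```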

To actually apply this, I could have added it directly to the site's file in sites-enabled, but that file gets reset every day by our configuration management system (Salt Stack). So instead I created another file, /etc/nginx/includes/limit_search.conf. Files in includes only take effect when they are explicitly included, and we have a section in our Salt configuration to do this.

In this file, I applied the actual limit to the search path:

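```nginx
location ~ /search {
    limit_req zone=bots burst=4;   # queue up to 4 excess requests, reject beyond that
    limit_req_status 429;          # instead of the default 503
    try_files $uri @rewrite;       # /search isn't a file; hand off to Drupal
}
```

This applies the "bots" limit to the /search path. The burst=4 setting lets up to 4 excess requests wait in a queue, delayed so they are passed on at the configured rate, before Nginx starts rejecting further requests with an error.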

By default, Nginx rejects rate-limited requests with a 503 error, but looking up status codes, I found that 429 ("Too Many Requests") is meant for rate limiting, so I used limit_req_status to send that instead.
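To make the burst setting concrete, here's a rough Python model of the leaky-bucket accounting that Nginx's limit_req documentation describes, for the case without the nodelay parameter. It's a simplified sketch, not Nginx's source: times are integers in milliseconds, each distinct key gets its own bucket, and the class and method names are hypothetical.

```python
class LeakyBucketZone:
    """Rough model of a limit_req zone: one leaky bucket per non-empty key, no `nodelay`."""

    def __init__(self, rate_per_minute: int, burst: int, reject_status: int = 429):
        # Integer bookkeeping in thousandths of a request, to avoid float rounding.
        self.rate = rate_per_minute * 1000 // 60  # milli-requests drained per second
        self.burst = burst * 1000                 # excess allowed to wait in the queue
        self.reject_status = reject_status
        self.buckets = {}  # key -> (excess in milli-requests, time of last accepted request in ms)

    def request(self, key: str, now_ms: int):
        """Return ("pass", 0), ("delay", milliseconds) or ("reject", status_code)."""
        if not key:
            return ("pass", 0)  # empty key: not accounted for, like limit_req_zone
        if key not in self.buckets:
            self.buckets[key] = (0, now_ms)
            return ("pass", 0)
        excess, last = self.buckets[key]
        elapsed = abs(now_ms - last)
        # Drain what leaked out since the last accepted request, then add this one.
        excess = max(excess - self.rate * elapsed // 1000 + 1000, 0)
        if excess > self.burst:
            return ("reject", self.reject_status)  # bucket left unchanged
        self.buckets[key] = (excess, now_ms)
        delay_ms = excess * 1000 // self.rate  # wait until the excess has leaked out
        return ("delay", delay_ms) if delay_ms else ("pass", 0)


if __name__ == "__main__":
    zone = LeakyBucketZone(rate_per_minute=30, burst=4)
    for _ in range(6):  # six requests from the same user agent at the same instant
        print(zone.request("Bytespider", now_ms=0))
```

In this model, six simultaneous requests from one user agent come out as: the first passes straight through, the next four are held for 2, 4, 6 and 8 seconds so they reach PHP at the 30r/m pace, and the sixth is rejected with a 429.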

Finally, this regex location block takes over handling of every matching URL, but /search doesn't exist as a file on disk, so I added the try_files line to fall back to @rewrite and get the request handled by Drupal/PHP.
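The try_files fallback can be modeled in a few lines of Python. This is a rough sketch, not how Nginx implements it: it assumes the server root is a local directory, only covers the plain `$uri` file check, and the helper name is hypothetical. @rewrite is the named location the site config uses to hand requests to Drupal.

```python
import os
import tempfile


def try_files(root: str, uri: str, fallback: str) -> str:
    """Rough model of `try_files $uri @rewrite`: a real file wins, otherwise use the fallback."""
    # $uri is the normalized path without the query string, resolved against the server root.
    candidate = os.path.join(root, uri.lstrip("/"))
    if os.path.isfile(candidate):
        return candidate  # served directly from disk
    return fallback  # e.g. the @rewrite named location, which hands off to Drupal/PHP


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        open(os.path.join(root, "robots.txt"), "w").close()
        print(try_files(root, "/robots.txt", "@rewrite"))  # path to the file on disk
        print(try_files(root, "/search", "@rewrite"))      # @rewrite
```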

The only thing left was to add it to the site configuration, inside the site's server block, using the line:

```nginx
include includes/limit_search.conf;
```

With that in place, traffic and load immediately dropped back to normal levels.

Comments

Thank you!

Thanks a bunch for this! This works to rate limit bots across multiple IPs, which is better than the IP approach I was using previously.

You're awesome!

Thanks

Hi, thanks for sharing this tip.

I had to apply rate limiting because GPTBot recently accessed my site more than I do and used more bandwidth than my typical usage.
