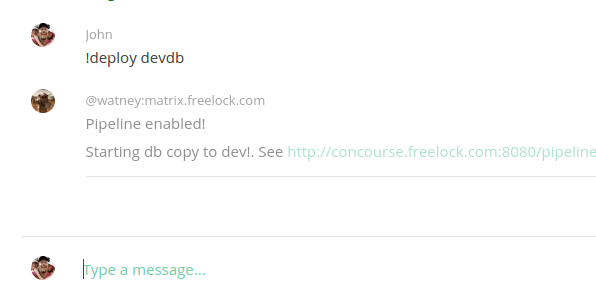

Its name is Watney. Watney lives in Matrix. Watney is a bot I created about 6 months ago to start helping us with various tasks we need to do in our business.

Watney patiently waits for requests in a bunch of chat rooms we use for internal communications about each website we manage, each project we work on. Watney does a bunch of helpful things already, even though it is still really basic -- it fetches login links for us, helps us assemble release notes for each release we do to a production site, reminds us when it's time to do a release, and kicks off various automation jobs.

In the brief time it has existed, Watney has kept getting smarter and doing more for us. It listens in a special room for notices from a bunch of our systems, and when it hears something interesting from one of them, it can take some sort of privileged action. For example, when anyone pushes code into our central repository, a message lands in the special deployment room, and Watney relays that to another system we use to manage all our servers. This makes it so that anyone on our team can change an alias to a site we manage, and within a minute or so that update is pushed out to all the development workstations, so our entire team gets the change and things "just work."
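To make the "listen, then relay" idea a bit more concrete, here is a minimal sketch in Python. It is not Watney's actual code: the room name, the sender name, and the `push <repository> <branch>` message format are all made up for illustration, and the Matrix connection itself is left out entirely.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class Notice:
    room: str    # chat room the notice arrived in
    sender: str  # system that posted it
    body: str    # plain-text message


# Hypothetical names, chosen only for this example.
DEPLOY_ROOM = "#deployment"
TRUSTED_SENDERS = {"git-server"}


def parse_push(notice: Notice) -> Optional[Tuple[str, str]]:
    """Return (repository, branch) for a trusted push notice, else None."""
    if notice.room != DEPLOY_ROOM or notice.sender not in TRUSTED_SENDERS:
        return None
    parts = notice.body.split()
    # Assumed message format: "push <repository> <branch>"
    if len(parts) != 3 or parts[0] != "push":
        return None
    return parts[1], parts[2]


def handle(notice: Notice, relay: Callable[[str, str], None]) -> bool:
    """Relay a push notice to another system; ignore everything else."""
    push = parse_push(notice)
    if push is None:
        return False
    relay(*push)
    return True
```

The point of the shape is that privileged actions only fire for notices from a known sender in one specific room, so chatter in the ordinary project rooms can never trigger them.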

Systems

In the past year, Freelock has come to rely upon a bunch of new software systems I had never even heard of a year ago. How we run all our work has changed pretty drastically over that time. Compared to how we worked just 3 - 4 years ago, what we do today is entirely different.

The goal is always to improve the quality of our work. The typical Drupal site we manage has over half a million lines of code, and there is a steady stream of security updates that change a bunch of different modules we use -- every now and then a fix to one module breaks another.

One of the biggest milestones towards that goal is getting a couple of entirely different kinds of tests running automatically for every release. With the 30+ sites we currently work with, the big challenge is coming up with an automation system that can handle all the variations between them. If you're managing one site and have a big team, this is pretty much a solved problem: you deploy a "Continuous Integration" (CI) server like Jenkins and set it to orchestrate all the tests you want to run. But when you have active Drupal 6, 7 and 8 sites, some using "Features", some using Drupal 8's new Configuration Management tools, and many using neither, getting something that can run some standard tests across the entire set can be extremely difficult.

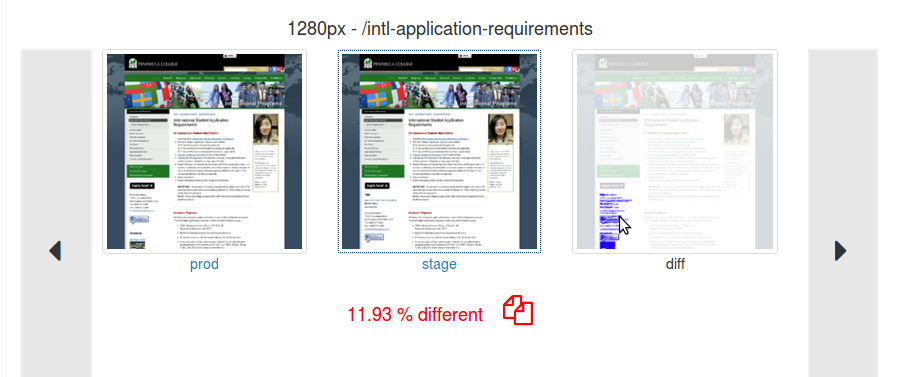

Especially when those tests become specific to the site, and not to the underlying code. We rely upon Drupal.org's unit testing to ensure that the core software operates the way it should -- but this doesn't cover whether the site looks the same after an update.

Continuous Integration

We had a single job set up to run with Jenkins, but basically all it did was attempt to copy the production database to our Stage environment, merge in the new code being released, sanitize the database so it doesn't accidentally spam (or charge) users, and let us know when it was done so we could manually run tests. That was proving very cumbersome to manage, and daunting to improve to the level we wanted.

So a couple months ago I looked around for alternatives, and found Concourse. This CI system breaks up the jobs into much smaller units that can be organized into pipelines, flowing one into the next. The model for managing this seemed to be a much better fit for us than the old Jenkins system we had, so I dove into using it to build up our automations. And at the start it seemed to be a huge improvement, and I quickly got some of our more complicated tests kicked off and running, along with a bunch of different deployment jobs -- huge wins!

I turned it on for a few projects. With 2 or 3 projects active, it worked fine, if a bit slow. But when I added a few more, it stopped working entirely.

The Rabbit-Hole

This led me down a rabbit-hole that took over a month to sort out. It turns out, Concourse was proving to be a huge resource-hog.

It works by defining a bunch of "resources" -- things like a particular branch of our git repository, a version number, a location to store test outputs, a notification -- and feeding those in and out of "tasks" that actually do stuff with them. Most of the resources are a git repository of some kind. And by default, Concourse would check every active resource for changes every single minute.

Our early pipeline had 5 - 6 of these git resources -- so when I turned on 6 pipelines I suddenly had up to 36 resources checking every minute. And I have over 30 of these pipelines to enable! I hadn't even gotten that far in building out the pipeline, and already we needed more hardware.

I ended up spinning up 7 different servers that act as "Workers", each one on beefier, more expensive cloud hosting than the last. I had to upgrade our main git server too, which couldn't handle all the requests. I dialed back the checking rate for most of these resources to once or twice an hour instead of per minute. I implemented a "pool" locking mechanism to prevent multiple jobs from running at once -- but even with all of these improvements and an expensive, high-powered server I could still only get to about 15 active pipelines before everything ground to a halt and lots of jobs started failing.
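The arithmetic behind that load is simple enough to sketch. The snippet below is only a back-of-the-envelope estimate: it assumes 6 git resources per pipeline (the upper end of the 5 - 6 I mentioned), treats "once or twice an hour" as one check every 30 minutes, and ignores everything else the workers do.

```python
def checks_per_hour(pipelines: int, resources_per_pipeline: int,
                    interval_minutes: float) -> float:
    """Estimate how many resource checks hit the git server per hour."""
    checks_per_resource = 60 / interval_minutes
    return pipelines * resources_per_pipeline * checks_per_resource


# 6 pipelines, checking every minute (the default described above)
print(f"{checks_per_hour(6, 6, 1):.0f}")    # 2160

# 30 pipelines, still checking every minute
print(f"{checks_per_hour(30, 6, 1):.0f}")   # 10800

# 30 pipelines, dialed back to one check every 30 minutes
print(f"{checks_per_hour(30, 6, 30):.0f}")  # 360
```

Slowing the checks cuts the request count a lot, but as noted above it was not enough on its own: the number of pipelines that could stay active at once was still the limit.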

To get anything actually done, I had to "pause" most of the pipelines, and just turn on the ones for projects we were working on -- as long as there were only a handful of active pipelines, the system worked great.

I had one other problem -- I was developing a single template of a pipeline, but once that got loaded into Concourse, I had to remember to manually update it if I had changed the template (to run new deployment steps, for example).

The Insight

So there I was, thinking "This Concourse system is great, but I'm not going to be able to afford to run it." I was thinking it would probably take 4 or 5 expensive, active workers to handle the load for all our sites -- but these would sit idle most of the time, aggressively checking for changes that might happen a couple times a week for most of the projects we manage, only to benefit the handful we're actively working on any given day. Expensive to run, puts high demands on other systems we run, all for doing mostly nothing.

Hey. Watney already knows when something interesting happens. And Watney can already trigger jobs in Concourse. What if Watney turns on a pipeline when something interesting happens, and turns it off an hour later?

I checked some assumptions with Concourse -- jobs don't get interrupted when pipelines get paused, resources continue to get cached as usual when a pipeline is paused -- and I couldn't find any downside to this approach.

I implemented it that evening, taught Watney to activate AND update the pipeline whenever the git server reported a new commit on one of three managed branches... And overnight we had it running across ALL our sites!
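Here is roughly what that logic looks like, as a hedged Python sketch rather than Watney's real implementation. The `fly` subcommands (`set-pipeline`, `unpause-pipeline`, `pause-pipeline`) are Concourse's own CLI, but the target name `ci`, the branch names, the template path, and the `PipelineWindow` class are assumptions made up for this example. Per-site variables that a real template would need are left out.

```python
import time
from typing import Callable, Dict, List

TARGET = "ci"  # hypothetical fly target name
# Hypothetical branch names; the real setup just has "three managed branches".
MANAGED_BRANCHES = {"develop", "release", "master"}
ACTIVE_SECONDS = 60 * 60  # keep a pipeline on for an hour after a commit


def activation_commands(pipeline: str, template_path: str) -> List[List[str]]:
    """Commands that refresh a pipeline from the template, then unpause it."""
    return [
        ["fly", "-t", TARGET, "set-pipeline", "-n",
         "-p", pipeline, "-c", template_path],
        ["fly", "-t", TARGET, "unpause-pipeline", "-p", pipeline],
    ]


def pause_command(pipeline: str) -> List[str]:
    return ["fly", "-t", TARGET, "pause-pipeline", "-p", pipeline]


class PipelineWindow:
    """Turns pipelines on when a commit arrives and off after a delay."""

    def __init__(self, run: Callable[[List[str]], object],
                 now: Callable[[], float] = time.time) -> None:
        self._run = run    # executes one command (injected, so it is testable)
        self._now = now    # clock, injectable for the same reason
        self._deadlines: Dict[str, float] = {}

    def on_commit(self, pipeline: str, branch: str, template_path: str) -> bool:
        """Update and activate the pipeline if the branch is a managed one."""
        if branch not in MANAGED_BRANCHES:
            return False
        for command in activation_commands(pipeline, template_path):
            self._run(command)
        # A later commit simply pushes the pause time further out.
        self._deadlines[pipeline] = self._now() + ACTIVE_SECONDS
        return True

    def pause_expired(self) -> List[str]:
        """Pause every pipeline whose hour is up; call this periodically."""
        now = self._now()
        expired = [p for p, due in self._deadlines.items() if due <= now]
        for pipeline in expired:
            self._run(pause_command(pipeline))
            del self._deadlines[pipeline]
        return expired
```

In real use, `run` could be something like `lambda cmd: subprocess.run(cmd, check=True)`. Because every commit re-runs `set-pipeline` before unpausing, this also takes care of the stale-template problem: a pipeline picks up the latest template each time it wakes up.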

The result

Today we have a full deployment pipeline that sanitizes and imports production databases to our stage environment, kicks off Behavior Driven Development and Visual Regression Tests for every release, deploys code and configurations to stage and production environments, manages a site version number, and delivers test results to Matrix. It already handles a lot of variations of our sites -- it can grab databases from sites we manage on Pantheon and Acquia as well as self-hosted servers.

Now we get to focus on what counts -- what the site actually does, and the tests that verify it works. Now we can define tests specific to each site, and verify that those continue to work with each release.

Through automation, we're able to manage a lot more sites with fewer people, and deliver a better result. For the past 2 years we've been steadily automating a lot of the work we do, and now it's starting to pay off!
